The Most Profitable Algorithm

When will we see the first truly autonomous profit-maximising algorithms? As we approach an era where artificial intelligence can clone professional expertise across industries, the question of algorithmic entrepreneurship becomes increasingly relevant.

Part I: Perfect Profit

Technology companies are cloning the domain expertise of professionals in industries like law, finance, marketing, transport and medicine [1]. PhD theses are being accelerated with Co-Scientist and approached outright with Deep Research [2].

Imagine you are tasked with designing a benchmark that, if solved, would enable perfect value creation.

Schumpeter’s “creative destruction” describes the process by which new innovations destroy established enterprises and business models. Historically, this change has occurred over generations. With reasoning models, there is scope to accelerate this process and better internalise the burden consumers pay when innovation is slow.

In theory, the marginal cost of production in competitive industries falls over time until consumers can take the cost of any good for granted. We’ve seen this play out with the real price of goods from supermarkets [3], the real cost of energy from solar [4], the real cost of computing [5], and the real cost of transport [6]. This pattern suggests that in sufficiently free markets, declining costs should occur universally, even in property markets, where new builds stabilise prices.

The key factor enabling this universal trend is the presence of competitive market conditions that allow natural cost efficiencies to develop and prices to adjust accordingly over time. The freer markets can be, the faster we can enter a world of material abundance.
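The real-price declines cited above can be checked with a few lines of arithmetic. The sketch below uses only the figures quoted in notes [3] and [4]; the function names are illustrative, not taken from any source.

```python
def real_index(item_cpi: float, all_items_cpi: float) -> float:
    """Price of an item relative to the general price level, scaled to 100."""
    return item_cpi / all_items_cpi * 100


def pct_decline(before: float, after: float) -> float:
    """Percentage fall from `before` to `after`."""
    return (before - after) / before * 100


# Figures quoted in note [3]: US Food CPI and All Items CPI, 1913 vs 2023.
food_1913 = real_index(25.5, 9.9)
food_2023 = real_index(306.9, 307.2)
food_decline = pct_decline(food_1913, food_2023)

# Figures quoted in note [4]: solar PV cost in USD per kWh, 2010 vs 2020.
solar_decline = pct_decline(0.378, 0.068)

print(f"Real food price index: {food_1913:.2f} -> {food_2023:.2f} ({food_decline:.0f}% lower)")
print(f"Solar PV cost per kWh: {solar_decline:.0f}% lower")
```

The calculation deflates the item's price index by the general price index, so a fall in the ratio means the good became cheaper relative to everything else, even though its nominal price rose.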

Moravec’s Landscape of Human Competence and the Great Flood

Moravec’s Landscape maps human cognitive capabilities against machine abilities, symbolised by the waterline, slowly rising and destined to one day consume all tasks. Once, clerks and bank tellers held prestigious roles, demanding a degree and commanding an expensive salary. Now, Clerk is a SaaS app and a bank teller has, for the entire duration of my life, referred most notably to the ATM. Needless to say, these skills of arithmetic, along with translation, chess, poker and Go, are now firmly submerged beneath the waterline.

Moravec’s Paradox reveals that contrary to traditional assumptions, high-level reasoning tasks are often easier for machines to master than basic sensorimotor skills that even toddlers possess. Hard skills for machines have, up until recently, included facial recognition, catching a ball, or recognising a voice. The peaks of Moravec’s original illustration were the great arts: art, cinematography, science and writing books. These are not solved problems by any means, but with modern diffusion products like Midjourney and Runway, artists are forced to confront what these disciplines will look like when it no longer requires a small army to put an idea to screen.

Encroaching on artistry leaves a sour taste in the mouth for those who believe that human creativity is sacred. But how do these folk react to big corporates, who price gouge and hike prices in the name of profit?

Few consider the technical implications of automating entrepreneurship itself, which may represent the most meaningful role for artificial intelligence when it comes to improving the lives of normal people.

Who believes that investment banking, tax accounting, or corporate legal compliance are sacred arts that must be maintained in the fashion they are? There is a substantive set of reasons to consider the implications of AI-driven entrepreneurship:

First, as AI automates production processes, previously scarce resources will become increasingly abundant and affordable as the marginal cost of entrepreneurship tends to zero. The real cost of computation has declined by over 99.9% since the 1950s [8], suggesting a pattern that could extend to all goods and services affected by computation. This will enable material abundance—and for those who benefit from UBI, time and creativity will become the only currencies.

Second, our markets favor economies of scale, leaving specialised needs underserved. For example, fewer than 5% of rare diseases have FDA-approved treatments [9], primarily due to unfavorable development economics. AI systems that reduce R&D costs make serving niche markets financially viable. This “long tail” economic model could profitably serve any group, even a single person, launching us into the perfect bespoke economy.

Third, innovation will be fast. While claims of “monthly economic doubling” are unrealistic in the medium-term [10], the productivity acceleration could be substantial, supporting annual doubling around the year 2040. If the aid protocol of an organisation like the IFRC can be automated, we can carve a shorter path to medical response.

If the work behind the Oxford-AstraZeneca vaccine effort had been automated and twice as fast, would anyone have found it morally objectionable? AI systems that work continuously, without human limitations like fatigue or distraction, will provide crisis innovation at a far greater rate. But why wait for another pandemic: we should duplicate the R&D loop of a company like Calico and run it 100x faster to carve a far shorter path to solving pathological disease (killing over 120,000/day).
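To put the doubling times mentioned above in perspective, it helps to convert each into an annual growth factor. This is a minimal sketch of that conversion; the three scenarios are the ones named in the text and in note [10], and the function name is illustrative.

```python
def annual_growth_factor(doubling_time_years: float) -> float:
    """How many times larger the economy is after one year."""
    return 2 ** (1 / doubling_time_years)


scenarios = {
    "doubling every 15 years": 15.0,
    "doubling every year": 1.0,
    "doubling every month": 1 / 12,
}

for label, years in scenarios.items():
    factor = annual_growth_factor(years)
    print(f"{label}: x{factor:,.3f} per year")
```

A 15-year doubling time corresponds to growth of a little under 5% a year, annual doubling to 100% a year, and monthly doubling to roughly a 4,000-fold expansion in a single year, which is why the last is treated here as unrealistic in the medium term.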

Closing the Build Loop

True saturation occurs when AI closes the build loop: automating all stages from R&D to deployment, eliminating human intervention after the “point of want”. This requires integrating five layers:

- Research: Identifying market opportunities and consumer needs through real-time analysis.

- Autonomous Development: Developing novel solutions, software and hardware through AI-driven innovation.

- Autonomous Deployment: Prototyping, testing, and validation with continuous quality assurance and safety verification.

- Distribution: On-demand scaling with serverless architectures and distributed manufacturing, alongside dynamic supply chain optimisation.

- Iteration: Self-improving feedback loops that enhance system capabilities, refining both the product and the development process itself.
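The layers above can be pictured as stages in a loop that feeds its own output back in. The following is a toy sketch only: every name in it (`Product`, `make_stage`, `run_build_loop`) is hypothetical, and each stage merely records that it ran, standing in for whatever real system would do the work.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Product:
    need: str                      # the "point of want" that starts the loop
    version: int = 0
    log: List[str] = field(default_factory=list)


Stage = Callable[[Product], Product]


def make_stage(name: str) -> Stage:
    """Build a placeholder stage that only records that it ran."""
    def stage(product: Product) -> Product:
        product.log.append(f"v{product.version}: {name}")
        return product
    return stage


def iterate(product: Product) -> Product:
    """Final stage: fold feedback into the next version."""
    product.log.append(f"v{product.version}: iteration")
    product.version += 1
    return product


STAGES: List[Stage] = [
    make_stage("research"),
    make_stage("development"),
    make_stage("deployment"),
    make_stage("distribution"),
    iterate,
]


def run_build_loop(need: str, cycles: int) -> Product:
    """Run every stage in order, `cycles` times, with no human step in between."""
    product = Product(need)
    for _ in range(cycles):
        for stage in STAGES:
            product = stage(product)
    return product


result = run_build_loop("a cheaper rare-disease assay", cycles=2)
print(result.version, len(result.log))
```

The point of the sketch is structural: once the last stage returns something the first stage can consume, the loop is closed and the number of cycles is limited only by compute.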

Early autonomous versions of this loop will materialise in the next couple of years as copious amounts of new AI-generated software flood the internet. But hardware is catching up. Figure’s humanoids can handle iterative assembly line reconfigurations. NVIDIA’s Omniverse acts as a coordination layer, simulating factory supply chains and A/B tests for physical and digital products before deployment. Expect cloud providers to feature heavily in the deployment phase, and for distribution, look towards X, Product Hunt, forums and marketing. Products that allow the AI to iterate autonomously might involve Featurebase-style consensus sites with direct integration, turning feature requests into commits.

Superlinear scaling in cities: when a city doubles in size, it produces more than double the patents, innovations, and economic output, and at the cost of less than double the infrastructure.

Part II: Perfect Power

To truly design a benchmark symbolising “value creation”, we must move beyond traditional metrics like profit or efficiency.

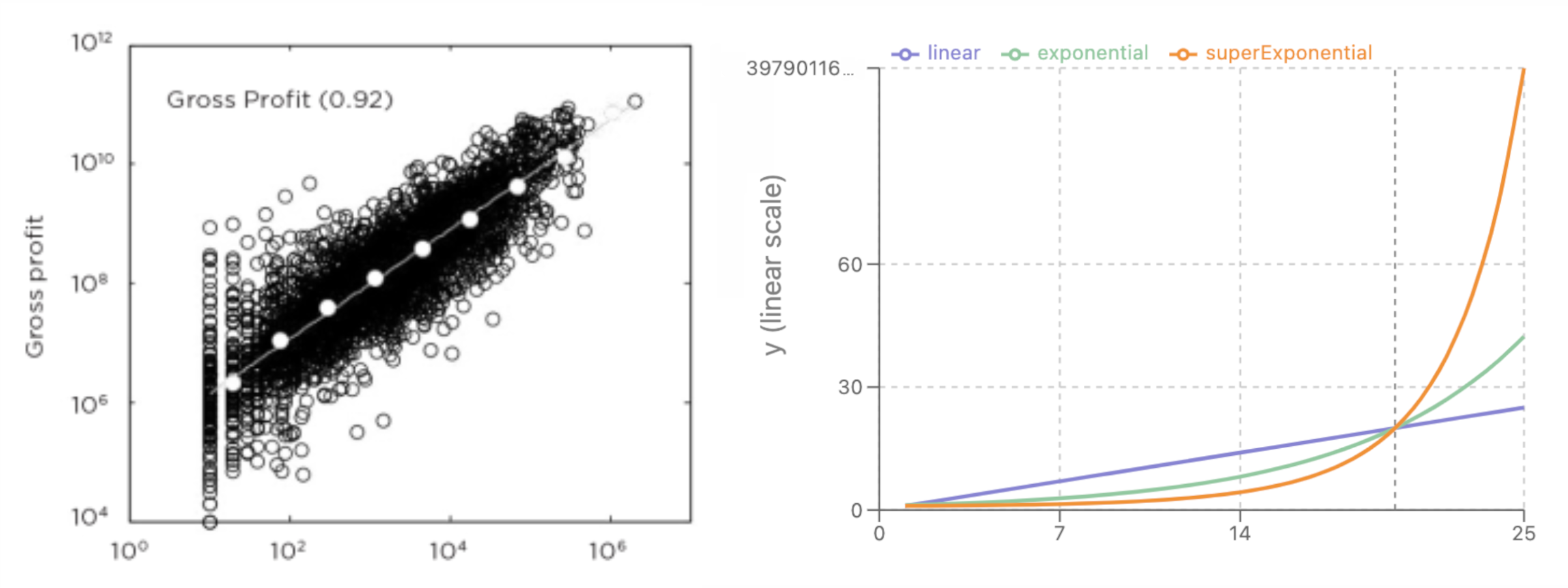

If profit-maximising algorithms focus on efficiency in existing markets, power-maximising systems seek to expand the domain of what can be controlled and optimised. By operating at the scale of markets, these algorithms could exhibit superlinear scaling. Growing companies experience declining efficiency in line with Metcalfe’s Law. Markets, cities, and civilisation each demonstrate superlinear scaling, where output grows faster than input [11].
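Superlinear scaling is usually written as a power law, Y = Y0 · N^β, where N is population and β is the scaling exponent: β > 1 is superlinear, β < 1 is sublinear. The sketch below shows what doubling N does under each regime. The two exponents are assumed, illustrative values chosen only to sit either side of 1; the measured figures are in the study cited as [11].

```python
def scaling_ratio(size_multiplier: float, beta: float) -> float:
    """Factor by which Y changes when N is multiplied, under Y = Y0 * N**beta."""
    return size_multiplier ** beta


# Assumed, illustrative exponents (not quoted from the cited study).
BETA_OUTPUT = 1.15          # superlinear: patents, innovation, economic output
BETA_INFRASTRUCTURE = 0.85  # sublinear: roads, cabling, other infrastructure

output_ratio = scaling_ratio(2.0, BETA_OUTPUT)
infra_ratio = scaling_ratio(2.0, BETA_INFRASTRUCTURE)

print(f"Doubling population multiplies output by {output_ratio:.2f}")
print(f"Doubling population multiplies infrastructure by {infra_ratio:.2f}")
```

Under these assumptions a doubling of population more than doubles output while less than doubling infrastructure, so output per unit of infrastructure rises with scale.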

Algorithms operating at civilisation scale would harness these superlinear effects, creating unprecedented abundance. This shift is implied by what Richard Brautigan envisioned in “All Watched Over by Machines of Loving Grace”—systems that liberate humans from scarcity constraints (while also being aligned enough to never weaponise our trust).

In Robin Hanson’s The Age of Em, computational systems can replicate human expertise and operate at electronic speeds, but exist as full-brain emulations of humans, ensuring they behave like us. In reality, algorithmic systems are on track to bypass human-like cognition entirely, in favor of different, potentially more efficient architectures.

The Demand-Automation Frontier

The question is not whether algorithmic systems will achieve civilisational scale, but when—and more importantly, under what governance structures and with what degree of alignment. In one future, algorithms will go from predicting and fulfilling human desires to satisfying demands autonomously. In another future, algorithms will actively generate their own demands, orthogonal and independent of human objectives.

Two futures:

- A utopian scenario resembling Banks’ Culture series, where aligned algorithms create abundance that serves human flourishing, or

- A dystopia where algorithms optimise for metrics that diverge from human welfare, accelerating such that humans become burdensome.

Let us use corporations as an exercise to understand these unfamiliar, “alien” algorithms. Corporations already function as autonomous vehicles for value extraction: they’re algorithms instantiated through human components, legal structures, and capital flows. If the next evolution involves systems that can self-assemble and self-improve without human intervention at every stage, they may adopt the same decentralised efficiency mechanism as our existing corporations (imperfect, but sufficient for human flourishing). Sometimes, organisations act in a limited, self-serving way and create great inequality. If we can police algorithms the same way watchdogs police companies, we can expect to find ourselves alive and tending towards the first future.

But what are the pitfalls of this?

Bostrom’s Orthogonality Thesis asserts that intelligence and goals are inherently separate dimensions. In its strong form, the thesis claims that there is no inherent difficulty in creating an intelligent agent pursuing any specific goal, provided that goal can be computationally represented. Thus, any superintelligent system could be designed to maximise something as seemingly arbitrary as paperclips without any “natural evolution” toward human values. A superintelligent paperclip maximiser could use its intelligence solely to become better at making paperclips, not to question whether paperclips are “truly valuable.”

Companies have historically trampled the line of moral acceptability: slave enterprises like the South Sea Company were granted monopoly rights to supply enslaved Africans to Spanish colonies in the Americas; the East India Company had its own private army and acted as a colonial power; and Enron, less malicious or violent, caused great damage by inflating its profit and stock price and cashing out executives while encouraging others to keep buying. Sometimes, as in the case of I.G. Farben, they can be truly malignant: manufacturing Zyklon B gas with the same slave labor it was used on. Consensus in markets is reinforced by a complex mechanism of regulators, auditors and insurance partners. Provided autonomous companies also seek insurance and credibility (both likely if they are engaged in competition), these release valves will continue to hold.

A Pure-Tech Race Condition

Whoever builds a self-improving system first may lock in compound advantages, ensuring their system improves faster than all future competitors [12]. This creates powerful incentives to develop increasingly autonomous economic algorithms that converge on purer and more unstable technology in race conditions.
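The lock-in claim depends on improvement feeding on itself. A toy model makes the distinction visible: if a system improves at a fixed rate, a later entrant stays a constant factor behind; if the rate of improvement rises with current capability, the same head start turns into a widening gap. This is a sketch under stated assumptions, not a forecast. The update rule, the rate of 0.05 and the five-step delay are all hypothetical.

```python
from typing import List


def trajectory(steps: int, rate: float, self_improving: bool, start: float = 1.0) -> List[float]:
    """Capability over time.

    Fixed-rate growth multiplies capability by (1 + rate) each step.
    Self-improving growth scales the rate by current capability, so
    better systems also improve faster.
    """
    capability = start
    path = [capability]
    for _ in range(steps):
        step_rate = rate * capability if self_improving else rate
        capability *= 1 + step_rate
        path.append(capability)
    return path


def lead_ratio(path: List[float], delay: int, t: int) -> float:
    """Leader's capability at step t divided by that of an identical
    follower who started `delay` steps later."""
    return path[t] / path[t - delay]


fixed = trajectory(steps=20, rate=0.05, self_improving=False)
recursive = trajectory(steps=20, rate=0.05, self_improving=True)

for t in (10, 20):
    print(
        f"step {t}: fixed-rate lead x{lead_ratio(fixed, 5, t):.2f}, "
        f"self-improving lead x{lead_ratio(recursive, 5, t):.2f}"
    )
```

In the fixed-rate case the leader's advantage never changes in relative terms, which leaves room for a competitor with a slightly better rate to catch up. In the self-improving case the relative advantage itself grows with time, which is the compounding lock-in the paragraph describes.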

These algorithms will naturally outcompete legacy bureaucracy, accelerating the shift towards pure efficiency. If this race cannot be controlled, we risk losing control of our destiny. For this reason, autonomous organisations must be considered within the scope of AI Safety Research, and we should anticipate “self-driving startups” becoming an academic field of study.

What do things look like if they go to plan? In the best case, we develop systems that automate away scarcity while remaining aligned with human values. These systems would accelerate scientific progress, revolutionize manufacturing, and solve previously intractable problems in medicine, energy, and space exploration.

But even in this optimistic scenario, we face a crucial challenge: human capability becomes the limiting factor. As these systems accelerate beyond human cognitive speeds, we become the bottleneck in the loop. Amodei’s Machines of Loving Grace is only a chapter of a grander story.

Conceivably, the first generation of supervised AIs will accelerate human cognition, extend lifespan, and interface directly with neural systems to give humans an advantage over improvements to artificial reasoning systems. Now, if perfect power algorithms are our vector for achieving utopia, we must agree on the perfect end state of the universe.

Civilisation at the Omega Point

Part III: Perfect Potential

Imagine a galaxy-scale algorithm that is capable of behaving like a deity of immense power (bounded only by the energy it has access to and the laws of physics). What should it choose to spend its resources on? And importantly, what project, if any, deserves all the galaxy’s resources?

Pierre Teilhard de Chardin observed that evolution progresses through increasing complexity and “centration”—inward organisation, or “elegance”. For instance, modern AI is the result of an algorithm transcending its programmed parameters and developing emergent capabilities.

It appears that some uses of resources enable advanced behavioural unlocks. The point where maximum complexity and consciousness is achieved is the “Omega Point” in Chardin’s model. In a practical sense, we might have an Omega value for any given galaxy, solar system or planet: the behavior emitted if all governed matter were to be reassembled perfectly into an object (or system) of perfect efficiency.

What behaviours would such an object express? Some possible unlocks:

-

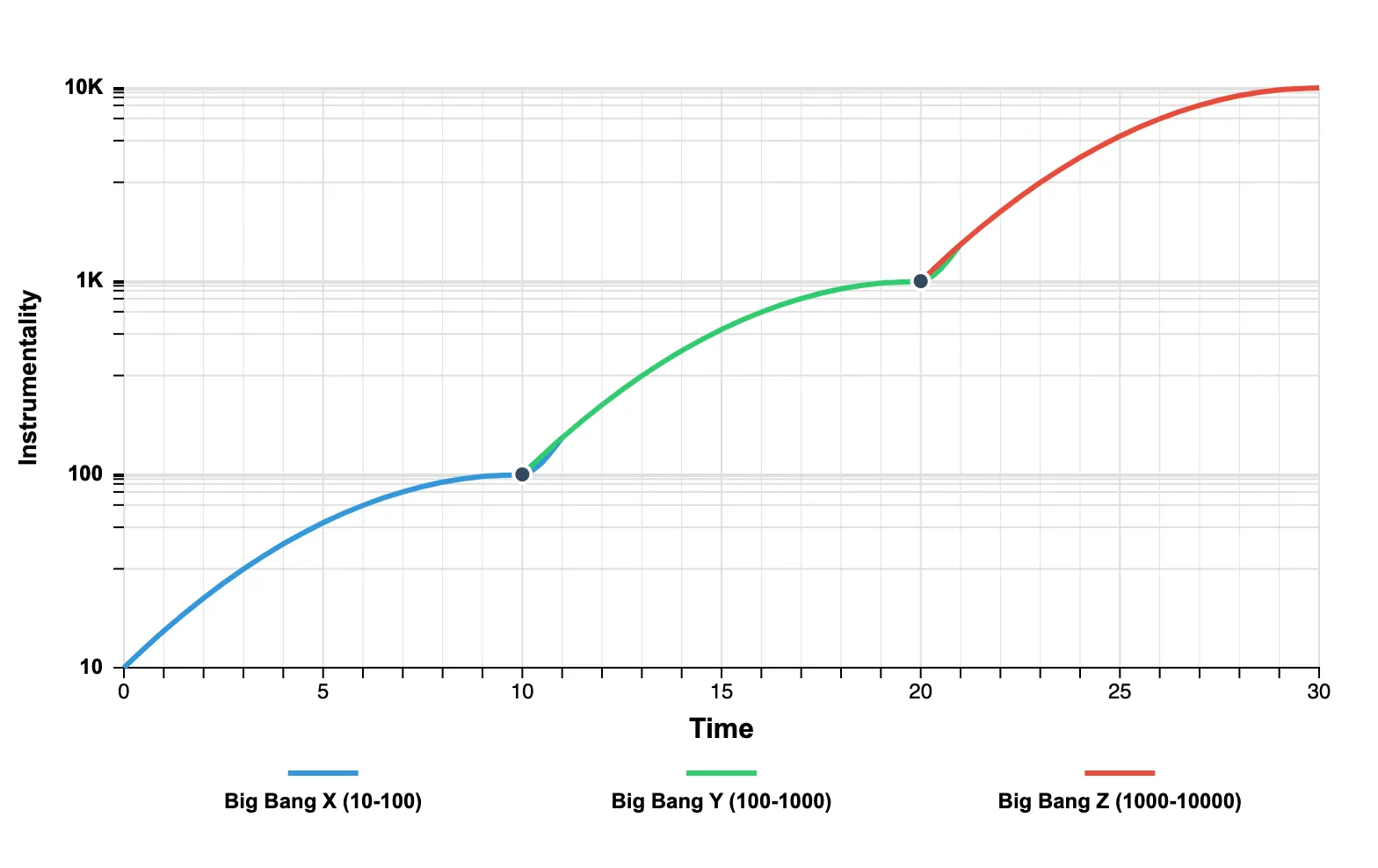

Ability to Trigger Big Bang Events: The most interesting theory about the universe is the perspective that it portrays fractal-like properties. If the universe isn’t isotropic (as this report published today suggests) then the universe was born rotating, aligning with black hole cosmology. If true, then the Big Bang was merely an event in natural evolution that led to stable conditions for our particular universe, conditions that a wider multiverse is not subject to. Conceivably, a civilisation of sufficient capability could catalyse a selection of such events, each with new atomic building blocks.

- Big Bang events could lock in important properties, reducing entropy in the long-run and enabling unprecedented new behaviours to emerge. Imagine instead of being made of light, we were made of another abstraction. A being composed of “reasoning” or “empathy” cells, for instance, might have fascinating new instrumental goals that inspire higher-dimensional beings.

- Big Bang events could lock in important properties, reducing entropy in the long-run and enabling unprecedented new behaviours to emerge. Imagine instead of being made of light, we were made of another abstraction. A being composed of “reasoning” or “empathy” cells, for instance, might have fascinating new instrumental goals that inspire higher-dimensional beings.

- Clairvoyance and Non-Duality: The ability to derive patterns that are computationally intractable to lesser systems. Mega-qualia could give rise to indescribable awareness and absolute comprehension of fields like mathematics or physics. Beings of sufficient complexity may be visibly dormant, running computations that cost yottawatts.

- Hyper-creativity: Beyond mere problem-solving, supreme beings will possess the ability to generate entirely new forms of order and beauty that require a deep awareness of existence; an Omega-algorithm would be artistic (and perhaps egoistic).

  - Playfulness, combined with the ability to simulate many worlds with different parameters, (potentially harbouring the lives of near infinite children) could result in this being behaving as a creator, roleplaying as god, and configuring worlds like games where characters can transcend and emerge from their worlds.

- Transcendence: Beings of sufficient complexity may be capable of emerging from our own universe. Singularities may enable entry to advanced realms through the center of rotating black holes, for instance.

Chardin’s vision of evolutionary complexity culminating in an Omega Point resonates with several contemporary thinkers exploring similar terrain. Kevin Kelly’s concept of the “technium” in his work “What Technology Wants” [13]—his view of technology as a living, evolving system with its own imperatives—suggests that our technological infrastructure is becoming a quasi-biological entity with emergent properties.

Stuart Kauffman’s pioneering work on self-organization and emergence in complex systems, detailed in “At Home in the Universe” [14], provides a theoretical framework for understanding how order spontaneously arises from chaos, potentially explaining how algorithmic systems might develop unforeseen capabilities.

Meanwhile, Eastern philosophical concepts of non-duality could provide interesting parallels to algorithmic emergence, particularly in how separate entities might transcend their boundaries to form higher-order consciousness.

What unifies these perspectives is the recognition that power without elegance is merely force. True potential—the measure of absolute power—lies in the elegant organization of resources toward emergent complexity.

Event Horizon

We began by examining profit-maximising algorithms that remake industries and markets, creating unprecedented efficiency and abundance. These systems form the resource foundation for power-maximising systems capable of reforming civilisation, harnessing superlinear effects beyond what any human organisation could achieve.

Yet both profit and power serve a higher purpose: the development of perfect potential—systems capable of unlocking behaviours and capacities beyond our comprehension; many generations of AGI ahead of us. The progression from entrepreneurial algorithms to civilisation-scale systems to transcendent entities follows a trajectory of increasing elegance.

The most profitable algorithm builds the foundation. The most powerful algorithm builds the order. But it is the most elegant algorithm that fulfills the potential.

Bibliography

[1] Domain expertise cloning examples: Casetext for law, AI Invest for finance, Copy.ai for marketing, Waymo for transport, and K Health for medicine.

[2] PhD thesis automation tools: Co-Scientist and Deep Research.

[3] From 1913 to 2023, the real price of food in the US fell by approximately 61%, calculated using the Consumer Price Index (CPI) data from the Bureau of Labor Statistics (BLS). In 1913, the Food CPI was 25.5 and the All Items CPI was 9.9, giving a real price index of about 257.58. In 2023, the Food CPI was 306.9 and the All Items CPI was 307.2, giving a real price index of about 99.9, indicating a significant decrease. Source

[4] From 2010 to 2020, the global weighted average cost of electricity from solar photovoltaic (PV) fell from approximately $0.378 per kilowatt-hour (kWh) to $0.068 per kWh, a decrease of about 82%. Source

[5] The cost per gigabyte of storage fell from over $1 million in 1980 to approximately $0.019 in 2018. Likewise, the cost of computing fell from $46.4 million per gigaflop in 1984 to $0.03 in 2017, adjusted for inflation. Source

[6] Since the deregulation of the airline industry in 1978, real airfares have fallen by about 50%. For example, the average flight cost from Los Angeles to Boston decreased from $4,539.24 in 1941 (adjusted to 2015 dollars) to $480.89 in 2015. Source

[7] Gordon, R. J. (2016). The Rise and Fall of American Growth. Princeton University Press. Gordon examines how productivity growth peaked during 1870-1970 before slowing in subsequent decades.

[8] Nordhaus, W. D. (2007). “Two Centuries of Productivity Growth in Computing.” The Journal of Economic History, 67(1), 128-159.

[9] Tambuyzer, L., et al. (2020). “Therapies for rare diseases: therapeutic modalities, progress and challenges ahead.” Nature Reviews Drug Discovery, 19, 93-111. Source

[10] In The Age of Em (2016), Hanson suggests that companies able to expand production for little more than the cost of electricity and subsistence (cloud costs and other rental) could double the economy every month, rather than every 15 years. There is no reason this won’t eventually happen, but in the medium term (pre-2040) it seems unlikely.

[11] Bettencourt, L. M. A., et al. (2007). “Growth, innovation, scaling, and the pace of life in cities.” Proceedings of the National Academy of Sciences, 104(17), 7301-7306. This study demonstrates that cities exhibit superlinear scaling, where resources and outputs scale faster than population size.

[12] Yudkowsky, E. (2008). “Artificial Intelligence as a Positive and Negative Factor in Global Risk.” In Global Catastrophic Risks, edited by Nick Bostrom and Milan M. Ćirković. Oxford University Press. Discusses the concept of recursive self-improvement and the potential for systems to gain compound advantages.

[13] Kelly, K. (2010). “What Technology Wants.” Viking Press. Kelly explores the concept of the technium and technology as an autonomous force with its own evolutionary trajectory.

[14] Kauffman, S. (1995). “At Home in the Universe: The Search for the Laws of Self-Organization and Complexity.” Oxford University Press. Kauffman presents his theories on self-organization and emergence in complex systems.